Battle of the Pollsters: 6D Edition

We take a deep dive into the polling ahead of last December's parliamentary elections and reach some unexpected conclusions about which company did best.

So, which pollster did best in approximating last year’s parliamentary election results? It’s…not that clear.

Polling is an opaque business in Venezuela. We operate far from the gringo standard where tons of polls are financed by media outlets, universities, think tanks and other bodies whose usual practice is to publish results as soon as they get them.

In Venezuela pollsters make a living selling results to a closed list of clients, who often have to sign a contract swearing up and down that they won’t share them with anyone else.

Of course, they inevitably do. Well, unless you’re DATOS: somehow their polls never leak. But for everyone else, leaks are the norm. PDFs get forwarded, people gossip, WhatsApp roars.

Since disclosure is seldom open and official, the information ends up scattered, and accountability withers. Sure, you could say they’re accountable to their clients, but those clients can’t easily check how their pollster did against all the others. To have real accountability, you have to be able to compare.

That’s why we took the time to collect the leaks and we’re here to tell you whether your favorite pollster was anywhere near the actual results of 6D.

What we did was simple: we took the three staple questions in any poll (the President’s approval rating, voting intention, and good old situación país) and checked how well they foretold the eventual election results.

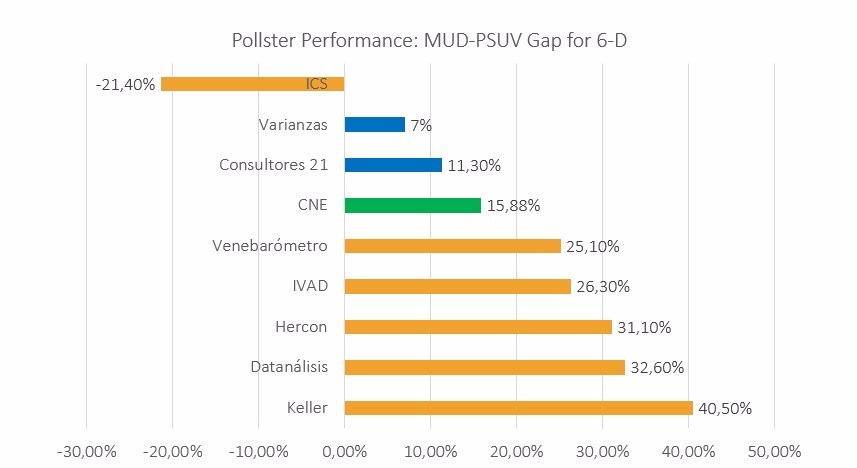

¿Quién la pegó? (Who was closest to the voting results?)

The first thing that strikes you about that chart is that almost everyone massively over-estimated MUD’s lead. Either the race tightened considerably right at the end, or there was something very wrong with these polls.

Rising from the ashes, Consultores 21 came out on top with the closest estimate of the gap between MUD and GPP. For a pollster that was roundly pilloried for its terrible call in October 2012, when it said Capriles might just beat Chávez (he lost by 10+ points), that’s a pretty remarkable result, and one that has earned them precious little good press. So let’s right that wrong right here, right now: way to go, C21!

They were followed by Varianzas, which came in second despite landing barely within the margin of error. Everyone else was off. Way off.

It doesn’t seem timeliness had that much to do with it. C21 and Varianzas weren’t the only firms that brought out polls within a month of the election. Datanálisis, Keller, Delphos and Venebarómetro, among many others, published one final measurement during November. As a matter of fact, November was the busiest month for forecasts in 2015.

Both Varianzas and Consultores 21 tend to understate MUD’s advantage (as does the way-off, patently fake chavista pollster International Consulting Services, ICS). But this time the two firms’ numbers hit right on target.

Surprisingly, the often-quoted Datanálisis fell on the fringe alongside El Cafetal favorites like Keller, overestimating the gap in favor of the opposition by more than 15 percentage points. It bears noting: they got this wrong by a wider margin than C21 got it wrong in 2012!

The fact that Datanálisis stuck with three separate forecasts in the end didn’t help their case either. How hard to read can a scenario be if your answer is really three answers? Yet even by their most conservative prediction, they were giving the MUD a margin of at least 20 percentage points.

Who was closest to the actual number of deputies?

Given Venezuela’s mixed proportional/district-based electoral system, vote shares do not translate neatly into seat shares in the AN, as became evident in the 2010 parliamentary elections. For this reason, both polling firms and investment banks abroad got another chance to show their forecasting skills, this time focused on the number of seats each electoral alliance would win.

A key point in this comparison is that some prediction models are explicitly based on a given poll (CC’s model, for instance, is based on Datanálisis’ situación país, and the banks’ forecasts work with a number of polls). But since they used that information to come up with a result of their own, we take their conclusions into account as well.

| MUD seats prediction model | Seats range | Range average | Poll |
| --- | --- | --- | --- |
| Varianzas | 77-87 | 82 | own |
| Morgan Stanley | 84 | 84 | own |
| C21 | 96-104 | 100 | own |
| Credit Suisse | 101 | 101 | open |
| Barclays | 101 | 101 | open |
| Bank of America | 101-111 | 106 | open |
| Caracas Chronicles/Distortioland | 111 | 111 | Datanálisis |
| Venebarómetro | 103-121 | 112 | several |
| CNE (actual result) | 112 | 112 | |
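For readers who want to re-run the comparison, here is a minimal Python sketch that scores each row of the table against the CNE figure of 112. The seat ranges are copied from the table; the scoring rule (miss of the range midpoint, with ties going to the narrower range) is our own illustrative assumption, not any forecaster's method, and the function names are made up for the example.

```python
# Seat forecasts transcribed from the table above: (low, high) ends of each range.
# Single-number forecasts are stored as a range of width zero.
FORECASTS = {
    "Varianzas": (77, 87),
    "Morgan Stanley": (84, 84),
    "C21": (96, 104),
    "Credit Suisse": (101, 101),
    "Barclays": (101, 101),
    "Bank of America": (101, 111),
    "Caracas Chronicles/Distortioland": (111, 111),
    "Venebarómetro": (103, 121),
}
ACTUAL_MUD_SEATS = 112  # CNE row in the table


def score(low, high, actual=ACTUAL_MUD_SEATS):
    """Return (midpoint, absolute miss of midpoint, range width, range covers actual)."""
    midpoint = (low + high) / 2
    return midpoint, abs(midpoint - actual), high - low, low <= actual <= high


def ranking(forecasts=FORECASTS):
    """Sort models by midpoint miss; ties go to the narrower range (assumed rule)."""
    rows = [(name, *score(low, high)) for name, (low, high) in forecasts.items()]
    return sorted(rows, key=lambda row: (row[2], row[3]))


if __name__ == "__main__":
    for name, mid, miss, width, covers in ranking():
        print(f"{name:34s} mid={mid:5.1f} miss={miss:4.1f} width={width:2d} covers={covers}")
```

Under this rule the midpoint alone puts Venebarómetro first, but the width column makes visible how much room its 103-121 range left itself compared with a single-number call like 111.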

The final analysis by Bank of America’s Francisco Rodríguez (F-Rod), Caracas Chronicles/Distortioland and Venebarómetro came out on top in this exercise, each for different reasons.

As you can see from the table above, only BofA and CC/DL came close enough to the opposition’s two-thirds supermajority to call it a win for them. Which tells us a couple of things. First, everybody predicted an opposition landslide in terms of votes, and yet almost no one dared give the opposition a two-thirds majority of seats.

Secondly, everybody has officially lost their mind. How do you even manage to overestimate MUD’s vote share but underestimate the number of seats said votes get you?

Anyway, it was precisely Francisco Rodríguez (Bank of America) who warned that this scenario was plausible, and he deserves recognition for insisting on how elastic the eventual victor’s vote swing would be in chavista strongholds. Nevertheless, F-Rod himself underestimated that elasticity in MUD’s vote gain by suggesting that an 18-point margin would be the threshold for the opposition to win the coveted mayoría calificada. It wasn’t: 15 did the job.

In the case of Caracas Chronicles/Distortioland, the model did come close to the actual results, falling short by just one deputy, which is remarkable. On top of that, CC managed to pin it down to a single number instead of a range as most did. And yet the underlying model seemed to be at the limit of its forecasting abilities: had situación país fallen to 4%, it would still have given the opposition the same number of seats, something that in hindsight looks rather unlikely.

The compilation of circuit-level surveys done by Edgard Gutiérrez’s Venebarómetro, like Francisco Monaldi’s predictions, did coincide with the number of seats won by the opposition, although within a much wider range of outcomes, which in our book subtracts merit points.

A word of caution on grading seat forecasts: small factors ended up playing large roles in the election, among them the split opposition vote in certain circuits, which could have moved the “magic number” one way or another. And yet, BofA and Caracas Chronicles/Distortioland were still pretty lonely in the 101-plus range among forecast models.

Polling isn’t guessing. It’s asking questions to come up with a good prediction of what might happen. In doing this performance review, we realised how foreign this concept seems to be to most pollsters out there.

We still don’t know why so much of the polling overstated MUD’s lead by as much as it did. But pollsters should be held accountable for that, for sure.

It’s true, in the end they answer to those who pay the piper. But their work always ends up being quoted by voters and the media alike. They’re not selling pies. They sell information that can in fact condition the way voters sway. A little accountability in this business shouldn’t be too much to ask for, but somehow it is. If not for the sake of the public, at least for the sake of those paying.

Considerations and other details for our analysis:

- We only considered polls whose field work was done within a month of election day.

- Given the tremendously high number of undecided voters in most polls, we rescaled all figures to 100% among those with a declared preference between the government and the opposition. The only exception is the 2010 Parliamentary elections, where PPT is considered as a third option.

- Given that we are rescaling voters’ preferences in polls to 100%, we rescaled CNE results in the same way so that both remain comparable.

- In cases where a polling firm presents more than one scenario, we presented the intermediate one.

- For the parliamentary seats forecasting models, we applied each model to the actual CNE results from 6D in order to evaluate their performance against the actual results. Nevertheless, some of these models do not disclose their forecasts for different vote shares, in which case we present their estimations based on their own vote share scenarios (as was the case for: Varianzas, Morgan Stanley, Consultores 21, and Caracas Chronicles).

- In the cases where seats forecasting models presented a range of seats for their estimation, we showed the average of said range (such was the case of: Varianzas, Consultores 21, Bank of America, and the aggregate analysis of electoral district polls).
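The rescaling described in the list above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names are ours, and the poll and result figures in the usage example are made up, not taken from any pollster or from the CNE.

```python
def two_way_shares(opposition, government):
    """Rescale two raw percentages so they sum to 100, dropping undecided/other."""
    decided = opposition + government
    if decided <= 0:
        raise ValueError("no respondents with a declared preference")
    return 100 * opposition / decided, 100 * government / decided


def gap_error(poll, result):
    """Poll's opposition-minus-government gap minus the actual gap, in points.

    Both arguments are (opposition, government) raw percentages, and each is
    rescaled to decided voters first so the two gaps are comparable.
    A positive value means the poll overstated the opposition's lead.
    """
    poll_opp, poll_gov = two_way_shares(*poll)
    res_opp, res_gov = two_way_shares(*result)
    return (poll_opp - poll_gov) - (res_opp - res_gov)


if __name__ == "__main__":
    # Hypothetical poll: 48% opposition, 32% government, 20% undecided.
    hypothetical_poll = (48, 32)
    # Hypothetical official result: 54% vs 42%, 4% other or null.
    hypothetical_result = (54, 42)
    print(two_way_shares(*hypothetical_poll))
    print(gap_error(hypothetical_poll, hypothetical_result))
```

Rescaling both sides the same way is what keeps the comparison fair: a poll with 20% undecided would otherwise look closer to (or further from) the result simply because its raw numbers are smaller.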
