How to Count Our Dead

The Venezuelan Violence Observatory (OVV) publishes the country's most cited figures on violence. Here's why those figures are very wrong — and why you should cite our estimate instead.

This text is published in Spanish in Prodavinci.

To measure violence the dead must first become data. Pen in hand, a clerk completes a death certificate: the name of the deceased, her age. Where she lived, where she died. Whether she succumbed to natural forces or unnatural ones. All the relevant details, written in blue or black ink. The certificates are then collated, counted, the pen strokes converted to keystrokes, and the causes of death coded according to an international system.

This would seem to provide a straightforward way to gauge the rise or fall of lethal violence in Venezuela: simply tally the number of fatal shootings and stabbings and stranglings, and compare this year’s total to earlier ones. Yet in practice, processing the reams of paper death certificates takes years. Doctors and other health workers filled out 149,883 certificates in 2013 alone.

So those looking for up-to-date violence measures have no choice but to rely on estimates. After learning about the official death registries, and after consulting with colleagues at various universities in Venezuela, I estimated the 2015 violent death rate at about 70 per 100,000 people.

That’s high—among the highest in the world.

But the most widely cited estimate is much higher still. The Venezuelan Violence Observatory (OVV) estimates 90 violent deaths per 100,000 people in 2015.

That’s a difference of more than 6,000 victims, enough to fill every seat in the Ríos Reyna hall at the Teresa Carreño—twice over.

This discrepancy made me wonder. How could we take the same data, the same deaths, the same death certificates, and arrive at such different conclusions? Did 6,000 victims go missing in my analysis? Or did OVV’s researchers create them?

I called OVV to find out.

The mechanics of body counting

For researchers, there are two main sources of data on lethal violence in Venezuela: the Ministry of Health and the police.

The Ministry of Health creates Venezuela’s national death registry, based on all those paper death certificates. What makes this registry useful for measuring violence is that causes of death are coded according to the International Classification of Diseases.

The death registry isn’t perfect. But the World Bank estimates that it covers 97% of deaths. And while some causes of death might be tricky to code (was pneumonia the culprit, or was it heart failure?), bullet wounds are not.

The Ministry of Health makes the death registry available online, together with a 96-page document describing how the data are collected and processed. For researchers, that’s especially useful.

The biggest problem with the Ministry of Health data is the wait. Creating, checking, and finalizing the death registry takes years. Venezuela’s Ministry of Health is somewhat slower than its Latin American counterparts; while the most recent data we have is from 2013, Argentina and Mexico have already published data for 2014, and Colombia put out preliminary figures for 2015. Still, few countries publish these data right away, and with good reason: ensuring the quality of such a large, complex data set doesn’t happen overnight.

Perhaps, come next January, we’ll have the Ministry of Health data for 2014. But not everyone can wait three years to learn about changes in Venezuela’s security situation. For policymakers, businesspeople and journalists, real-time estimates are important.

Fortunately, the national investigative police, CICPC, also keep relevant records, and they work a bit faster. CICPC tracks the number of cases related to homicides, police violence, and deaths from external causes—e.g., bullets—that may or may not have been intentional.

The number of cases is not at all the same as the number of deaths, so researchers treat them differently. For one thing, there are many cases of police violence in which no one dies; for another, one homicide case could involve multiple victims, or vice versa. But even if the number of cases doesn’t tell us the number of violent deaths, the trend in number of cases tells us something about the trend in violent deaths.

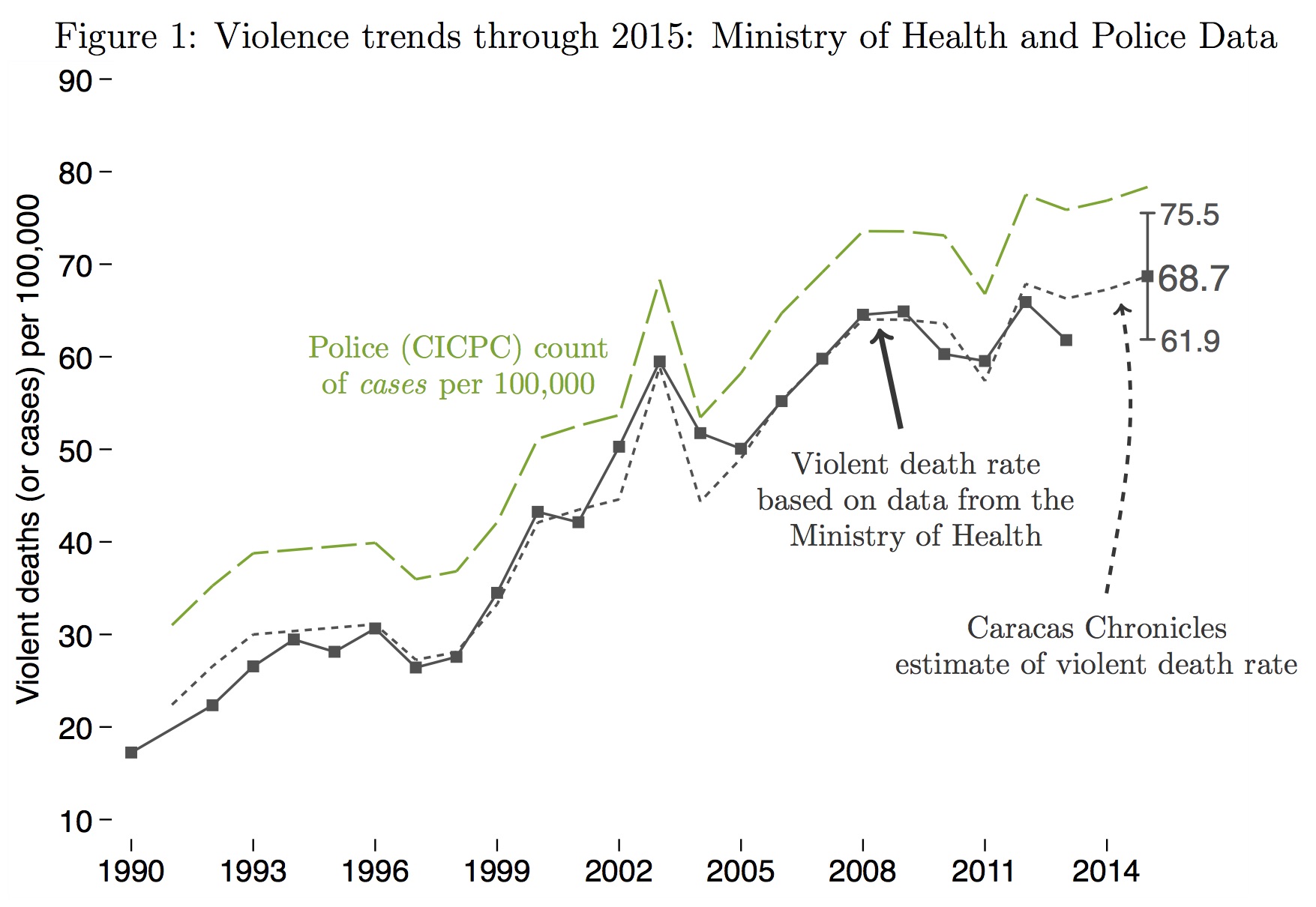

To see this, check out Figure 1.

The gray solid line plots the number of violent deaths recorded by the Ministry of Health. By violent deaths, I mean deaths coded as homicides, as well as “violent deaths of unknown intent”—in other words, violent deaths in which it was unclear, at the time the death certificate was filled out, whether the violence was intentional or accidental. (For a complete list of ICD codes included in this measure, see the notes at the end of this article.) The green dashed line in Figure 1 plots the number of cases recorded by the police (that is, the number of homicide cases, police violence cases, and violent-death-of-unknown-intent cases all added together).

Clearly, the police (CICPC) register more cases than the Ministry of Health registers violent deaths—in part because many police violence cases don’t leave a dead body behind. But the important point is that, historically, these two trends have moved together: When the Ministry of Health count of violent deaths goes up or down, the police count of cases goes with it.

The Ministry of Health numbers (the gray solid line) only take us to—remember the lag—2013. The police numbers (the green dashed line) continue through 2015. And while the CICPC doesn’t publish its data, it does grant access to some researchers and non-governmental organizations—including a source close to Caracas Chronicles, a source we trust, who understands how the police figures are constructed and what they mean.

Our estimate

In our view, then, a worthwhile way to estimate the number of violent deaths in 2015 (and, early next year, the number of violent deaths in 2016) is to use the trend in the police data to predict what the Ministry of Health series will eventually show.

This exercise essentially says: the Ministry of Health creates Venezuela’s most reliable count for the number of violent deaths. This count is only available after a two-year delay. But we can use the trend in the police data to make an educated guess about whether the number of violent deaths increased or decreased over the past two years, and by how much. We can also use the data to say something about the precision of our estimate: how confident are we that we’re right?

Following this strategy, Caracas Chronicles estimates that the violent death rate landed between 62 and 75 per 100,000 in 2015. In other words, (62, 75) is our 95% confidence interval for the 2015 violent death rate. (For more details, read the technical notes at the end of the article.)

Just as important as this interval, our analysis tells us something about the trend in violent deaths. We estimate that the violent death rate rose less than 5% since 2012. That’s a significant increase, though it’s small compared to the 20%–25% annual jumps of the early 1990s or early 2000s.

How good is this new Caracas Chronicles estimate? One way to evaluate it is to consider the plausibility of the underlying assumptions.

Perhaps the most important of these assumptions is that, in 2014 and 2015, the number of violent deaths continued to move together with the number of police cases. Since these two figures tracked each other for over twenty years leading up to 2013, this strikes us as a reasonable assumption. If you have reason to think otherwise, then you shouldn’t put much stock in our estimate (and you should let us know why in the comments).

Another way to evaluate our estimate is to compare it with others. In recent years, CICPC started keeping its own count of violent deaths, alongside its count of cases. For 2015, CICPC’s own count put the violent death rate at 73.5 per 100,000—within our confidence interval of (62, 75).

This reassured us that our estimate wasn’t wildly off the mark. But we still had a lingering doubt. If the true violent death rate really were on the order of 70 per 100,000, and if it increased less than 5% since 2012, how on earth did the Venezuelan Violence Observatory (OVV) get an estimate of 90 per 100,000, with a 28% increase over the same period?

The source of the discrepancy

For many years, OVV researchers had access to the leaked police data on the number of cases — the same police data that Caracas Chronicles obtained from a different source, and that we discussed above.

Back when OVV had access to these data, their job was pretty straightforward. They estimated the number of violent deaths by adding the number of homicide cases, 60% of the number of police violence cases (to account for the fact that there are many police violence cases in which no one dies), and 95% of external-deaths-of-unknown-intent cases (for details on the origins of this formula, see the notes at the end of this article). When their data weren’t quite up to date, they used forecasts. (A few years ago, we criticized what we saw as methodological opacity in the OVV press releases; subsequent press releases included much more detail.)

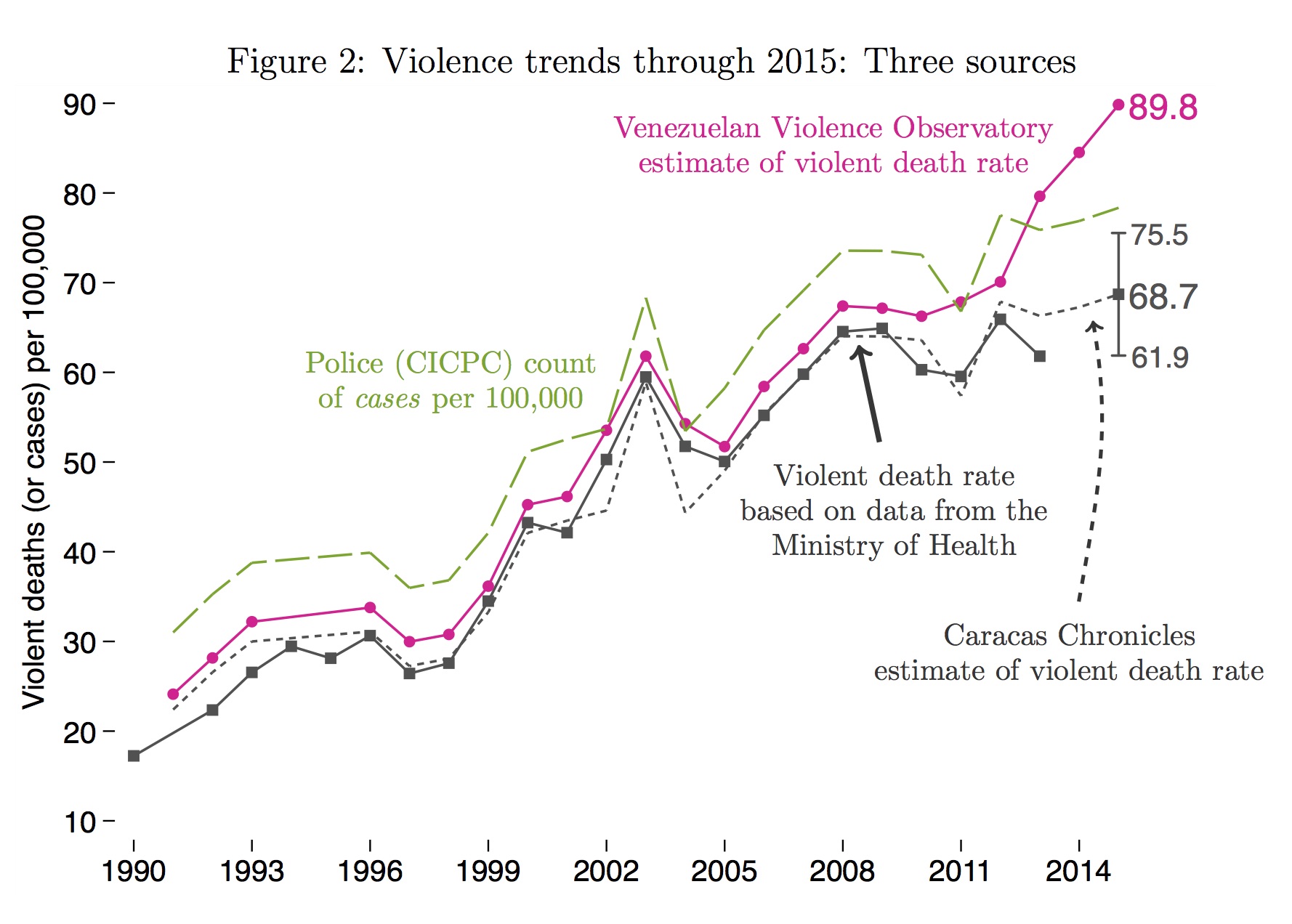

Was this method as precise as the CICPC’s recent effort to count the number of violent deaths? No. But it got the OVV a total similar to the Ministry of Health’s count. Check out Figure 2. OVV’s data are plotted in pink, on top of the Ministry of Health count (in gray) and the police figures (in green). You can see that, until 2012, the OVV’s estimate tracks the other two measures pretty closely (for notes on deviations pre-2012, see the technical notes).

After 2012, though, things get interesting. In 2013, the OVV data register a major increase, while the Ministry of Health count and police data point to a decrease. Curious.

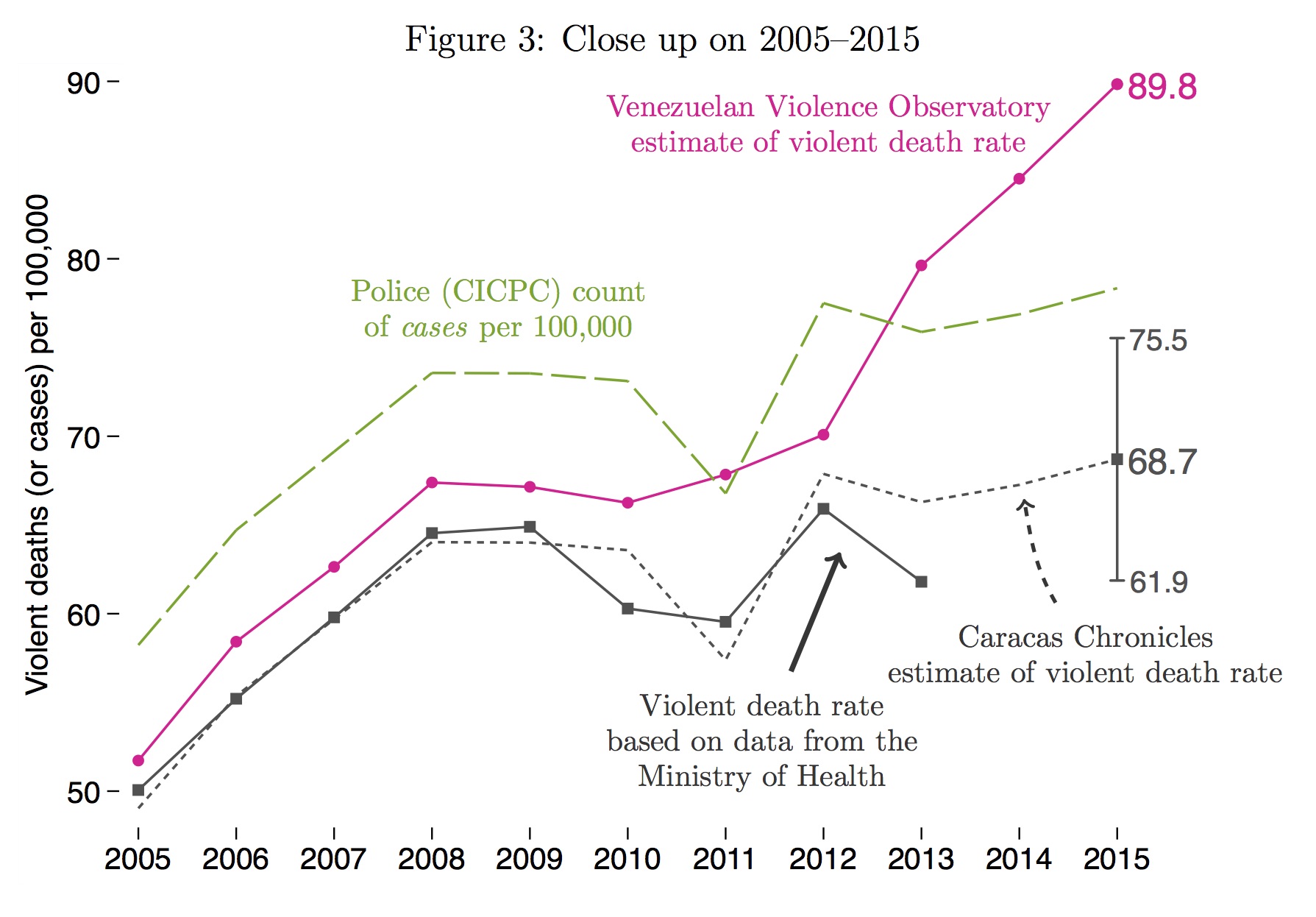

Even more curious is what happens in 2014 and 2015: the number of police cases pretty much levels off, while the OVV numbers keep climbing. See Figure 3 for a close-up on the most recent ten years.

For me, that discrepancy set off alarm bells.

OVV’s lead statistician, Alberto Camardiel, shared with us his data and calculations. As it turns out, the 2014 and 2015 figures are projections of the trend through 2013—meaning that, if the violent death rate went up in 2013, the next two years would likely follow suit.

But the 2013 number in Camardiel’s spreadsheet isn’t a projection. It’s a data point. And since the OVV’s original source for leaked police figures had cut them off, the 2013 number came from someone else: Deivis Ramírez, a seasoned sucesos journalist who reported the number in a press article. (Camardiel tried to contact Ramírez last year and was unsuccessful.)

Sounds reasonable, right?

As it turns out, no. The problem, as I found out when I called Ramírez, was that the 2013 number he provided just wasn’t comparable to the numbers OVV had for 2012 and previous years: Ramírez’s number and OVV’s earlier numbers measure different things. Combining them accounts for most of the disparity between OVV’s skyrocketing numbers and the estimates based on official data. (For what accounts for the rest of the disparity, see the technical notes.)

How exactly are Ramírez’s numbers different from OVV’s historical series?

Both Ramírez’s data and OVV’s were counts of police cases. But which cases, exactly? The police keep tallies of three types of cases: homicide, police violence, and death from external causes in which the police aren’t sure whether the injury was intentional or accidental.

The OVV interpreted Deivis Ramírez’s number as a count of homicide cases only. So, following their previous practice, they took Ramírez’s total for 2013 and then added to it 60% of police violence cases and 95% of external-cause-unknown-intent cases. (For these latter categories, they used projections based on earlier years.)

But as Ramírez later confirmed to Caracas Chronicles, the 2013 number he reported to OVV wasn’t just homicide cases—it was the sum of homicide cases and police violence cases (but not external-cause-unknown-intent deaths).

That means that OVV’s 2013 estimate of violent deaths double-counted police violence. Effectively, instead of A+B+C, they added A+B+B+C. The mistake created the false impression of a big uptick in violent deaths in 2013, relative to 2012.
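The arithmetic of the double count can be sketched in a few lines. The case counts below are invented purely for illustration (they are not CICPC or OVV figures), the function name is hypothetical, and the weights are the ones the article attributes to OVV's intended formula.

```python
def weighted_death_estimate(homicide_cases, police_violence_cases,
                            unknown_intent_cases,
                            w_police=0.60, w_unknown=0.95):
    """Homicide cases plus weighted police violence and unknown-intent cases."""
    return (homicide_cases
            + w_police * police_violence_cases
            + w_unknown * unknown_intent_cases)


# Hypothetical case counts, invented for illustration only.
homicide, police_violence, unknown_intent = 10_000, 3_000, 4_000

# Intended calculation: each category enters exactly once.
intended = weighted_death_estimate(homicide, police_violence, unknown_intent)

# The mistake: a figure that already sums homicide and police violence cases
# is treated as if it were homicide cases alone.
reported_figure = homicide + police_violence
double_counted = weighted_death_estimate(reported_figure, police_violence,
                                         unknown_intent)

overcount = double_counted - intended
print(f"intended:       {intended:,.0f}")
print(f"double-counted: {double_counted:,.0f}")
print(f"overcount:      {overcount:,.0f}")
```

With these made-up inputs, the overcount equals the full number of police violence cases: that category enters once inside the reported figure and then again at 60%.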

In fact, when Caracas Chronicles asked Deivis Ramírez for his numbers for all available years, we found that, when compared to an analogous figure for 2012, Ramírez’s data reveal a decrease in violent deaths in 2013 — just like the Ministry of Health data, and just like the police data from our source.

Let me put that point another way. When I asked OVV why they thought that violent deaths increased in 2013, they pointed me to Deivis Ramírez. But a conversation with Ramírez confirmed that his numbers, like ours from other sources, don’t indicate rising violence in 2013. Ramírez’s numbers show the opposite.

Drawing a line from OVV’s 2012 figure through Ramírez’s 2013 figure was a mistake. And because OVV moved to extrapolation in 2014 and 2015, it was a mistake amplified by time.
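To see why extrapolation amplifies the error, consider a minimal sketch of a random walk with drift, which is what an ARIMA(0,1,0) model with a constant term amounts to. Whether OVV's model included a constant is our assumption, and the series below is invented for illustration; the function name is hypothetical.

```python
def random_walk_with_drift(series, horizon):
    """Forecast `horizon` steps ahead: last value plus the average past change per step."""
    # The mean of the first differences simplifies to (last - first) / (n - 1).
    drift = (series[-1] - series[0]) / (len(series) - 1)
    return [series[-1] + step * drift for step in range(1, horizon + 1)]


# Hypothetical series, invented for illustration only.
earlier_years = [100.0, 104.0, 108.0, 112.0]
comparable_last = 110.0   # a final data point measured like the earlier ones
inflated_last = 125.0     # a final data point that double-counts a category

forecast_ok = random_walk_with_drift(earlier_years + [comparable_last], 2)
forecast_bad = random_walk_with_drift(earlier_years + [inflated_last], 2)

initial_gap = inflated_last - comparable_last
gaps = [bad - ok for bad, ok in zip(forecast_bad, forecast_ok)]
print("gap in the final data point:", initial_gap)
print("gap in the two forecasts:   ", gaps)
```

An inflated final data point raises both the level the forecast starts from and the estimated drift, so the gap between the two sets of forecasts widens with each projected year.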

The wrong source for research

When we shared a draft of this article with OVV, Alberto Camardiel confirmed that “the article’s description of the process OVV used to estimate the violent death rate corresponds with what we did.” He also noted that Ramírez’s original press article referred to the fateful 2013 figure simply as a count of “homicides,” failing to specify that the figure included both homicide cases and police violence cases. This is true; Ramírez, a journalist, perhaps had in mind the colloquial meaning of “homicide” (which could reasonably include police killings) rather than the technical definition employed by CICPC.

That ambiguity also characterizes various public statements from high-ranking government officials. In early 2013, for example, Néstor Reverol (who was then Interior Minister) told the press that there were 14,092 homicides in 2011 and 16,072 in 2012. This year, Attorney General Luisa Ortega Díaz claimed a 2015 homicide rate of 58 per 100,000. These numbers suffer from the imprecision of Ramírez’s original article: what did Reverol or Ortega Díaz mean by “homicide”? Did they mean homicide cases? Homicide victims? Victims of homicide and of police killings, added together? Or something else entirely? Without metadata, these kinds of statements don’t mean much to a researcher.

There were other reasons, too, not to use Ramírez’s reporting as the basis for an estimate of the violent death rate. His original article cited two different but remarkably similar homicide figures for 2013: one was 19,762, the other 17,962, leading the human rights organization PROVEA to conclude that “it was probably an involuntary inversion on the part of the journalist, and that the correct figure is [17,962].” (OVV also used this lower number.)

Adding to the confusion, Ramírez’s article attributes the 17,962 number to the consulting firm ODH, while Ramírez reported to me via email that this figure came from his contact within the CICPC. Meanwhile, Alberto Camardiel of OVV was under the impression that Ramírez had obtained the same number from a report by the Ministry of Interior and Justice.

When Ramírez sent me his data for the period 1999–2015, I compared it to the CICPC data obtained through our source and found that the series don’t match. After long conversations with both Ramírez and our source, I decided to disregard Ramírez’s data: among other problems, he seemed confused himself about what the data really measured, providing conflicting definitions in different exchanges. Strangely, Alberto Camardiel of OVV appeared to share my concerns. When I first asked him about Ramírez, he said that “[he could] neither confirm nor deny whether Ramírez was a reliable source.”

All of this strikes me as a good reason not to create estimates based on numbers from news articles or press conferences (at least, not without additional information). Ideally, the CICPC and other government agencies would publish official data, together with a description of how those numbers were generated (as the Ministry of Health does); if they did, I wouldn’t be writing this article. Since they don’t, I based my estimate on the two sources I trust: the Ministry of Health’s vital statistics data, and the CICPC’s count of cases. Certainly, I may have made a mistake in my calculations (in which case, I hope that this publication will lead someone to correct me). But I don’t share OVV director Roberto Briceño-León’s view that, as he wrote in an email, “it’s very hard, not to say impossible, to have a solid, substantiated opinion about the reliability of the data.”

Towards 2017

Ultimately, this is a debate over the dimensions of the tragedy in Venezuela, not over its existence. While OVV tells the more dramatic tale, with each year surpassing the last as the most violent in the country’s history, even our estimates reveal a devastating level of violence, with rates five times higher than those of a generation ago.

Addressing this tragedy demands an understanding of its scale, which in turn requires an accurate tally of the dead. The first months of 2016 are already rumored to have swallowed more lives than the same months last year; next January, when the country looks back and takes stock, how will we know for sure? How will we count the victims of lethal violence in 2016? And without properly counting the murders, how will we gauge policies designed to stop them?

We are not the first to stress the virtues of precisely measuring the problems we seek to solve. In the 1990s, after a decade of research on Venezuelan public health, OVV director Roberto Briceño-León turned his attention to violence. In one of his first articles on the subject, he presented graphs of changes in the homicide rate since the late 1970s. “Only with a thorough understanding of how much [violence] there is,” he concluded, “will it be possible for society to take the complex actions needed to try to prevent and avoid it.”

On that point, we are in complete agreement.

Technical notes

- The ICD codes included in the Ministry of Health violent death count are: W32, W33, W34, X85–Y09, Y10–Y34, Y35–Y36, Y89. Descriptions of each category are available here. Note that the violent death rate is conceptually distinct from the homicide rate; the former includes, for example, accidental firearm deaths (e.g., from stray bullets), while the latter does not. Thus, the numbers in this article should not be compared to homicide rates from other countries. Our violent death count does not include suicides or motor vehicle deaths.

- The Caracas Chronicles estimate was created simply by regressing the Ministry of Health violent death rate on the CICPC cases rate (i.e., number of cases per 100,000 population) and then taking the predicted value. We included all homicide cases, violent deaths of unknown intent cases, and police violence cases.

- To calculate the 95% “confidence interval,” we used the forecast standard error rather than the prediction standard error. See Section 11.3.5 here; we used what this text calls a “prediction interval.”

- A note about the formula that OVV uses to calculate the violent death rate. The text of the article states that OVV uses the formula: 1*(Homicide Cases) + 0.6*(Police Violence Cases) + 0.95*(Violent-Death-Unknown-Intent Cases). In fact, this was the formula that OVV intended to apply; in practice, a spreadsheet error meant that they actually used the formula 1*(Homicide Cases) + 0.95*(Police Violence Cases) + 0.6*(Violent-Death-Unknown-Intent Cases), inadvertently inverting the weights on police violence cases and violent-death-of-unknown-intent cases. In our article, we used the formula that OVV actually used (that is, with weights inverted) rather than the corrected formula. In recent years, the numbers of police violence cases and unknown-intent cases have been similar enough that the correction changes OVV’s estimate only slightly (making it 91.5 instead of 89.8 per 100,000).

- The text states that the mistaken use of Ramírez’s 2013 number accounts for most of the difference between OVV’s estimate and ours. Two other factors contribute.

- First, as explained in their press release, OVV modeled the homicide cases time series using an ARIMA(0,1,0) model and then used that model to create projections for 2014 and 2015. The software they use, SPSS, has two different commands for producing the projection; strangely, these two commands produce different output, and the one OVV used makes projections about 10% higher than the other command (in this specific case, for the year 2015). The other command (which produces the lower projection for 2015) matches the output from analogous commands in Stata.

- Second, recall that CICPC’s own count of violent deaths, based on their data, put the 2015 violent death rate at 73.5 per 100,000—higher than our estimate of 68.7. This suggests that, OVV’s methods aside, CICPC’s count of violent deaths is higher than that of the Ministry of Health. (This kind of discrepancy is not uncommon.)

- The text also notes that OVV’s violent death rate estimates follow a different trend than the CICPC data even before 2013: specifically, in 2010 and 2011. This is simply because OVV was using an older version of the CICPC data for those years (the same older version that has been published in PROVEA reports); if OVV had had access to the revised data, the trends would match for 2010 and 2011.
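For readers who want to see the mechanics of the regression and interval described in the notes above, here is a minimal sketch. It is not the code behind our estimate: the function name and the data are hypothetical, and it uses a normal critical value by default as a simplification (a Student's t value, passed in through the assumed `crit` parameter, would be more appropriate for a short series).

```python
import math
from statistics import NormalDist, mean


def forecast_with_interval(x, y, x_new, level=0.95, crit=None):
    """Regress y on x by least squares, then forecast y at x_new with a prediction interval."""
    n = len(x)
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    slope = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y)) / sxx
    intercept = y_bar - slope * x_bar

    # Residual variance with n - 2 degrees of freedom.
    residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]
    s2 = sum(r ** 2 for r in residuals) / (n - 2)

    # Forecast standard error: the leading "1 +" adds the noise in the new
    # observation itself, on top of the uncertainty in the fitted line.
    se_forecast = math.sqrt(s2 * (1 + 1 / n + (x_new - x_bar) ** 2 / sxx))

    if crit is None:
        # Simplifying assumption: normal quantile instead of Student's t.
        crit = NormalDist().inv_cdf(0.5 + level / 2)

    y_hat = intercept + slope * x_new
    return y_hat, (y_hat - crit * se_forecast, y_hat + crit * se_forecast)


# Hypothetical data, invented for illustration only:
# x stands in for a police cases rate, y for a registry violent death rate.
cases_rate = [1.0, 2.0, 3.0, 4.0, 5.0]
death_rate = [2.1, 3.9, 6.2, 7.8, 10.1]

estimate, (lower, upper) = forecast_with_interval(cases_rate, death_rate, 6.0)
print(f"point forecast: {estimate:.2f}, interval: ({lower:.2f}, {upper:.2f})")
```

Dropping the leading `1 +` inside the square root would give the narrower interval for the fitted line alone, which is the distinction the third note draws.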
